Artificial Neural Networks

Description:

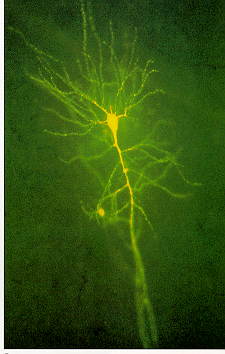

Artificial Neural Networks (ANNs) are information processing models inspired by the way biological nervous systems, such as the brain, process information. The models are composed of a large number of highly interconnected processing elements (neurons) working together to solve specific problems. ANNs, like people, learn by example. Unlike conventional computers, which can only solve a problem if the set of instructions or algorithm for it is known, ANNs are very flexible, powerful and trainable. Conventional computers and neural networks are complementary: a large number of tasks require a combination of a learning approach and a set of instructions. In most cases, the conventional computer is used to supervise the neural network.
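To illustrate what "learning by example" can mean in practice, here is a minimal sketch of a single artificial neuron trained with the classic perceptron learning rule. The task (logical AND), the function names `predict` and `train`, and the learning rate and epoch count are all assumptions chosen for illustration; they do not come from any particular library or from the sources listed on this page.

```python
# Training examples for logical AND: (inputs, expected output).
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def predict(weights, bias, inputs):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(examples, learning_rate=1, epochs=20):
    """Perceptron rule: nudge weights towards the target after each mistake."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            # When the prediction is correct, error is 0 and nothing changes.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


weights, bias = train(EXAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in EXAMPLES]
print(predictions)  # [0, 0, 0, 1]
```

No rule for AND is written into the program; the neuron only sees the four examples and adjusts its weights whenever it answers wrongly. The surrounding loop, which feeds in examples and decides when to stop, is ordinary conventional code.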

For more information: http://en.wikipedia.org/wiki/Neural_network

Enablers:

1. Research & Development: mathematicians, psychologists, neurosurgeons, etc.

2. Applications using artificial neural networks (e.g. sales forecasting, data validation, etc. from NeuroDimension).

3. Funding from international institutes (e.g. IST).

4. New technologies that enable profound research of the human brain activity.

Inhibitors:

1. Outcome ethical issues: Is there a danger in developing technologies that might perform similar (thinking) functions to the human brain?

2. Research ethical issues: Is it ethical to perform research and do experiments on the human brain and its functions?

3. Lack of scope and focus: this new technology might create the next information society revolution, so interest is high but spread widely over several industries.

Paradigms:

1. Artificial neural networks can already learn simple tasks today. Further investigation into the power of these systems, and into the power of combining them with conventional computer systems, will increase the power of a connected world or the internet.

2. ANNs will disappear as black boxes into our daily lives, supporting us with simple decision making where making a mistake is allowed (children's level). To increase the learning effect and for control purposes, these boxes will be interconnected via the internet.

Experts:

No experts found yet. If you know someone, please add him/her to this page.

Timing:

1933: psychologist Edward Thorndike suggests that human learning consists in the strengthening of some (then unknown) property of neurons.

1943: first model of an artificial neuron is produced (neurophysiologist Warren McCulloch & logician Walter Pitts).

1949: psychologist Donald Hebb suggests that a strengthening of the connections between neurons in the brain accounts for learning.

1954: first computer simulations of small neural networks at MIT (Belmont Farley and Wesley Clark).

1958: Rosenblatt designs and develops the Perceptron, a neural network with three layers.

1969: Minsky and Papert generalise the limitations of single-layer Perceptrons to multilayered systems (e.g. the XOR function cannot be computed by a 2-layer Perceptron, i.e. one with only an input and an output layer).

1972: A. Henry Klopf develops a basis for learning in artificial neurons based on a biological principle for neuronal learning called heterostasis.

1974: Paul Werbos develops the back-propagation learning method, the best known and most widely applied neural network learning method today.

1975: Kunihiko Fukushima develops a stepwise-trained multilayered neural network for the interpretation of handwritten characters (Cognitron).

1986: David Rumelhart & James McClelland train a network of 920 artificial neurons to form the past tenses of English verbs (University of California at San Diego).
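The XOR limitation mentioned in the 1969 entry can be checked directly. The sketch below is an illustration under stated assumptions, not a reconstruction of Minsky and Papert's argument: it searches a small grid of integer weights (the range -5 to 5 is an arbitrary choice) for a single threshold unit that computes XOR, then shows that adding one hidden layer with hand-picked weights is enough. The names `unit` and `xor_net` are hypothetical.

```python
from itertools import product

INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
XOR = [0, 1, 1, 0]


def unit(weights, bias, inputs):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


# Try every single unit with integer weights and bias in -5..5.
grid = range(-5, 6)
single_layer_solutions = [
    (w1, w2, b)
    for w1, w2, b in product(grid, repeat=3)
    if [unit((w1, w2), b, x) for x in INPUTS] == XOR
]
print(len(single_layer_solutions))  # 0: no single unit on this grid computes XOR


def xor_net(x):
    """Two hidden units (OR and NAND) feeding an AND output unit."""
    h_or = unit((1, 1), 0, x)        # fires if at least one input is 1
    h_nand = unit((-1, -1), 2, x)    # fires unless both inputs are 1
    return unit((1, 1), -1, (h_or, h_nand))  # fires only if both hidden units fire


print([xor_net(x) for x in INPUTS])  # [0, 1, 1, 0]
```

The empty search result on a finite grid is only suggestive; the general reason is that XOR is not linearly separable, so no choice of weights for a single threshold unit can work. The hidden-layer weights here are set by hand; learning such weights automatically is what methods like back-propagation address.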

Web Resources:

1. http://www.doc.ic.ac.uk/~nd/surprise_96/journal/vol4/cs11/report.html

2. http://www.dacs.dtic.mil/techs/neural/neural_ToC.html

3. http://www.economist.com/opinion/PrinterFriendly.cfm?Story_ID=1143317: The mind's eye